By Davis Mandela

Davis Mandela is an AI specialist and linguist focusing on digital policy and media ethics in East Africa.

Last month in a quiet estate in Kitengela, a mother opened her 14-year-old daughter’s phone and froze. There was her child’s face — smiling the same smile she knew so well — but on a body that was never hers. The image was circulating in a school WhatsApp group. The girl had done nothing wrong. Someone had simply fed her innocent class photo into a free AI “nudify” tool.

The mother told me later, voice shaking: “It felt like someone had reached into our home and taken her.”

That story is no longer rare in Kenya. It is happening right now.

The UNICEF Warning That Should Wake Us All

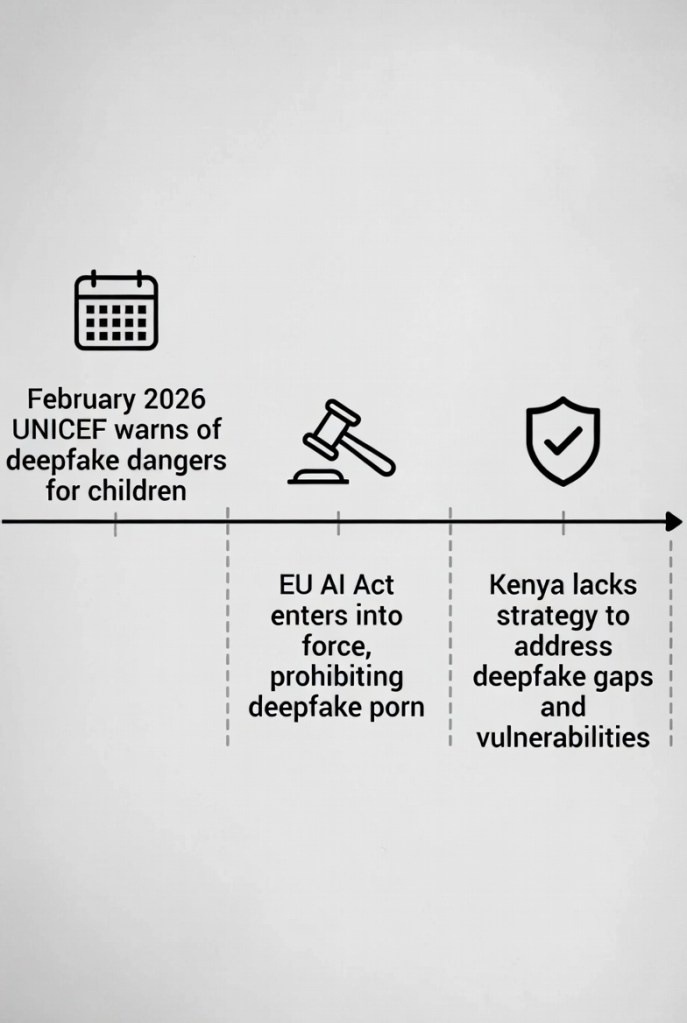

In February 2026, UNICEF released a chilling report: across 11 countries studied, at least 1.2 million children — including thousands here in Kenya and East Africa — had their images turned into explicit deepfakes in the past year alone. In some places, that’s one child in every classroom.

Read the full UNICEF press release here: “Deepfake abuse is abuse” – Statement on AI-generated sexualised images of children (4 February 2026).

The tools are terrifyingly simple. Anyone with a smartphone can take a girl’s photo from Instagram, Facebook, or even a school ID and generate explicit images that look completely real. The apps are free or cost less than a soda. No technical skill needed.

The Fear Is Already Here

From where I sit in Nairobi, talking to parents and teenagers, the fear is palpable. Girls are deleting every photo of themselves. Boys are learning that power now comes from an app. Teachers report students refusing to appear in class pictures. Families are whispering about it because shame still silences so many.

Kenya’s National AI Strategy 2025–2030 speaks beautifully about ethics, protection, and digital inclusion. Yet while we write strategies, the deepfakes are spreading faster than any law can catch them.

The UNICEF report is clear: “Deepfake abuse is abuse.” There is nothing fake about the shame, the bullying, the long-term trauma these children carry. In some countries in the study, up to two-thirds of young people already worry that AI could be used to create fake sexual images of them.

Who Is Protecting Them?

I keep thinking about the mother in Kitengela. She asked me a question I still don’t have a good answer for: “When my daughter’s face is stolen and put on someone else’s body, who is protecting her?”

The global conversation has started: the EU AI Act prohibits certain manipulative AI practices and requires deepfakes to be labelled, and the International AI Safety Report 2026 flags this exact risk. But here in Kenya and across much of East Africa, the rules remain voluntary or non-existent for everyday platforms.

What We Can Do — Starting Today

We don’t have to wait for perfect laws. Parents, schools, and tech companies can act now:

- Educate children about “nudify” tools and how to spot deepfakes

- Push platforms (WhatsApp, TikTok, Instagram) to detect and remove deepfakes faster

- Support the young people already living with the consequences — through counselling, safe reporting channels, and community conversations

Because the goal isn’t to fear technology. It’s to make sure technology never gets to violate our children while we look the other way.

The mother in Kitengela eventually sat her daughter down and they deleted every public photo together. They still use WhatsApp. They still take pictures. But now they do it with eyes wide open.

Call to Action

If you’re a parent, teacher, or young person in Kenya, tell me one thing below: “Scared”, “Angry”, or “Ready to act”.

Share this piece with someone who needs to see it. Let’s stop pretending this isn’t already happening in our homes and schools. Because in 2026, protecting our children’s faces might be the most urgent thing we do.

References

- UNICEF Press Release: “‘Deepfake abuse is abuse’ – Statement on AI-generated sexualised images of children”, 4 February 2026.

- UNICEF Issue Brief: “Artificial Intelligence and Child Sexual Abuse and Exploitation”, February 2026.

- UN News: “‘Deepfake abuse is abuse,’ UNICEF warns”, 4 February 2026.

- Kenya National AI Strategy 2025–2030 (Implementation Roadmap), Ministry of Information, Communications and the Digital Economy.

- International AI Safety Report 2026 (deepfake and emotion-influencing risks section).
